Bypassing ID.me AI Identity Verification, costing $3.4 million

Case Study Number, AISec-0003/24

Summary

An individual in California exploited weaknesses in ID.me's identity verification to file at least 180 fraudulent unemployment claims, using counterfeit driver's licenses and wigs to pass verification. He obtained at least $3.4 million and was eventually sentenced to nearly seven years in prison for wire fraud and aggravated identity theft.

Threat Capability Level, Productionised and Deployed: TRL9

Primary Threat Vector, Bypassing AI Verification

Date, October 2020

Reporter, ID.me internal investigation

Actor, One individual

Target, California Employment Development Department

Risk, High Risk

Country, USA

Incident Detail

An individual submitted at least 180 fraudulent unemployment claims in California between October 2020 and December 2021 by circumventing the automated identity verification system of ID.me. Dozens of these deceptive claims were approved, resulting in the individual receiving payments totalling at least $3.4 million.

The fraudster amassed several real identities and procured counterfeit driver's licenses that paired the stolen personal details with photos of himself wearing different wigs. He then registered accounts on ID.me and completed its identity verification process, which checks personal details and confirms identity by matching the ID photo against a selfie. By wearing the corresponding wig in each selfie, he successfully verified the stolen identities.

The claims were filed with the California Employment Development Department (EDD) under these verified identities. Because of vulnerabilities in ID.me's system at the time, the counterfeit licenses were mistakenly accepted as genuine.

The fraudster was eventually sentenced to nearly seven years in federal prison for wire fraud and aggravated identity theft.

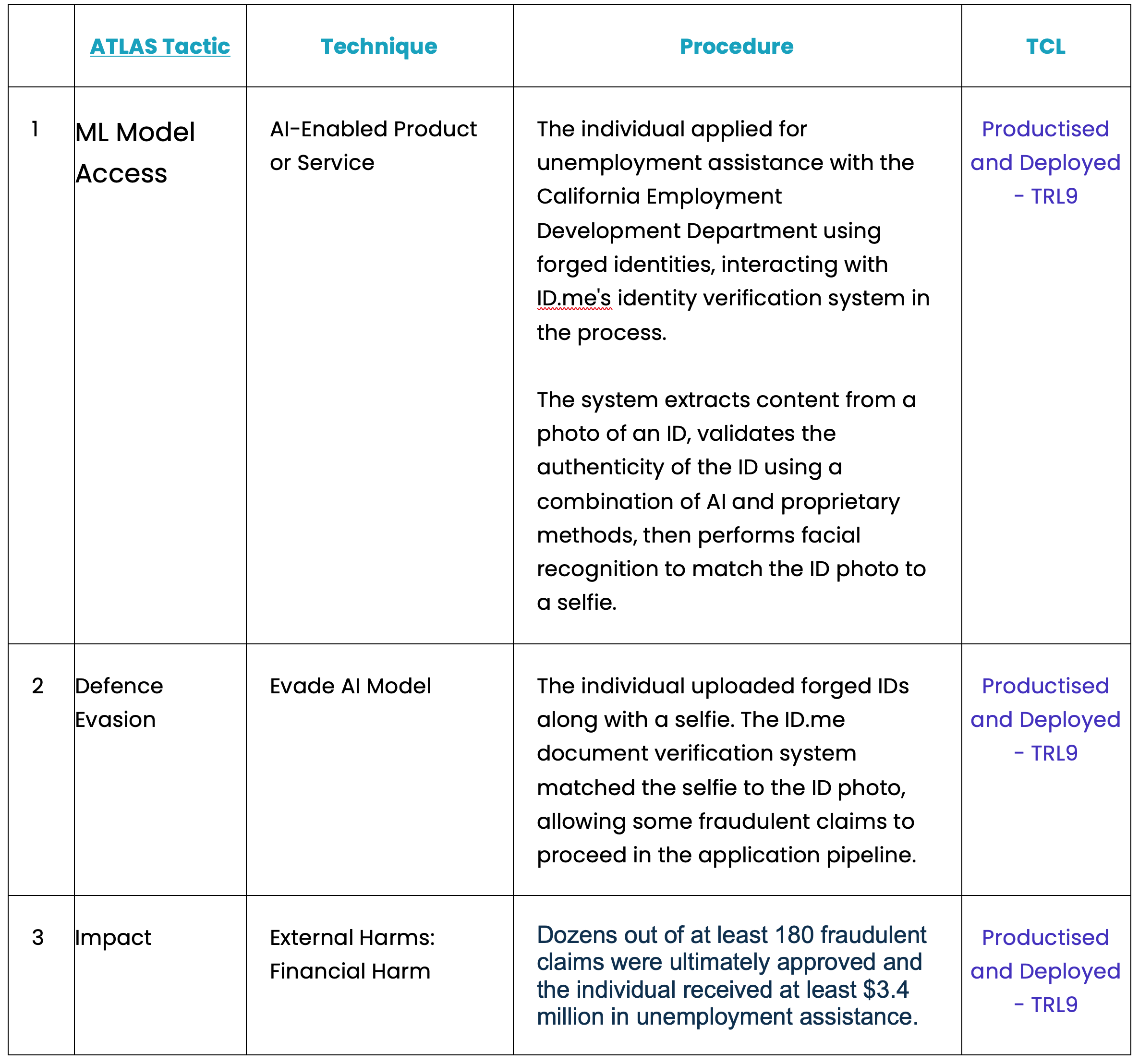

Tactics, Techniques, and Procedures

This case exemplifies the vulnerability of AI-based identity verification systems to physical disguise combined with counterfeit documents. The attacker exploited the gap between what the AI system could do (match the face on an ID to the face in a selfie) and what it could not do (detect that the ID itself was a high-quality physical forgery).
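The gap described above can be illustrated with a minimal sketch. It is not ID.me's implementation. The embedding vectors, the `MATCH_THRESHOLD` value, and the function names `one_to_one_match` and `find_shared_faces` are all hypothetical, and the sketch assumes a face embedding that is largely insensitive to hairstyle. It shows why a one-to-one comparison of ID photo against selfie passes when both show the same disguised person, and how a comparison across accounts might surface one face reused under several identities.

```python
import math
from itertools import combinations

MATCH_THRESHOLD = 0.9  # hypothetical similarity cut-off, chosen for illustration


def cosine_similarity(a, b):
    """Cosine similarity between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)


def one_to_one_match(id_photo_vec, selfie_vec):
    """1:1 check: does the selfie match the photo on the submitted ID?"""
    return cosine_similarity(id_photo_vec, selfie_vec) >= MATCH_THRESHOLD


def find_shared_faces(selfies_by_account):
    """Cross-account check: return pairs of accounts whose selfies look alike."""
    flagged = []
    for (acct_a, vec_a), (acct_b, vec_b) in combinations(
        sorted(selfies_by_account.items()), 2
    ):
        if cosine_similarity(vec_a, vec_b) >= MATCH_THRESHOLD:
            flagged.append((acct_a, acct_b))
    return flagged


# Toy embeddings (invented values). identity_A and identity_B stand for two
# stolen identities verified by the same person; identity_C is someone else.
accounts = {
    "identity_A": {"id_photo": [0.90, 0.10, 0.40], "selfie": [0.88, 0.12, 0.42]},
    "identity_B": {"id_photo": [0.86, 0.15, 0.45], "selfie": [0.91, 0.09, 0.38]},
    "identity_C": {"id_photo": [0.10, 0.95, 0.20], "selfie": [0.12, 0.93, 0.22]},
}

# Every account passes the 1:1 check, including the two fraudulent ones.
for name, photos in accounts.items():
    print(name, one_to_one_match(photos["id_photo"], photos["selfie"]))

# Comparing selfies across accounts flags the reused face.
print(find_shared_faces({k: v["selfie"] for k, v in accounts.items()}))
```

In this toy data the one-to-one check accepts all three accounts, while the cross-account comparison flags only the pair that shares a face. Neither check addresses whether the document itself is genuine, which would require separate document authenticity checks.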

Mitigations

- Undertake an AI security assessment.

- Catalogue your AI infrastructure assets (hardware and software).

- Employ a Secure by Design methodology for the development of your AI products and services.

- Gain a comprehensive understanding of your supply chain and construct your AI Bill of Materials (AIBOM).

- Maintain an Open Source Intelligence (OSINT) feed to stay abreast of emerging AI threat vectors.

- Autonomously track, prioritise, and document your vulnerabilities; there are too many for humans to handle manually.

- Utilise a quantitative risk management strategy that justifies the return on investment of your control measures.
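As one way to make the last mitigation concrete, the sketch below computes an annualised loss expectancy (ALE) and a return on security investment (ROSI), two commonly used quantitative risk measures. Only the $3.4 million figure comes from this case study, and using it as a single-loss estimate is an assumption. The occurrence rates and the control cost are hypothetical values chosen for illustration, not data from the incident.

```python
def annualised_loss_expectancy(single_loss, annual_rate):
    """ALE = expected loss per incident x expected incidents per year."""
    return single_loss * annual_rate


def return_on_security_investment(ale_before, ale_after, control_cost):
    """ROSI = (risk reduction - control cost) / control cost."""
    return (ale_before - ale_after - control_cost) / control_cost


# Loss per incident, taken from this case study (assumed as a single-loss figure).
single_loss = 3_400_000

# Hypothetical inputs: incident frequency before and after a control is
# introduced, and the yearly cost of running that control.
rate_before = 0.5      # one such incident every two years (assumption)
rate_after = 0.1       # one such incident every ten years (assumption)
control_cost = 400_000  # annual cost of the control (assumption)

ale_before = annualised_loss_expectancy(single_loss, rate_before)
ale_after = annualised_loss_expectancy(single_loss, rate_after)
rosi = return_on_security_investment(ale_before, ale_after, control_cost)

print(f"ALE before control: ${ale_before:,.0f}")
print(f"ALE after control:  ${ale_after:,.0f}")
print(f"ROSI: {rosi:.2f}")
```

A positive ROSI suggests the control reduces expected losses by more than it costs. The result is only as reliable as the frequency and loss estimates fed into it.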